Amazon SageMaker HyperPod reduces time to train foundation models by up to 40% by providing purpose-built infrastructure for distributed training at scale

Amazon SageMaker Inference reduces foundation model deployment costs by 50% on average and latency by 20% on average by optimizing the use of accelerators

Amazon SageMaker Clarify now makes it easier for customers to evaluate and select foundation models quickly based on parameters that support responsible use of AI

Amazon SageMaker Canvas capabilities help customers accelerate data preparation using natural-language instructions and model building using foundation models in just a few clicks

BMW Group, Booking.com, Hugging Face, Perplexity, Salesforce, Stability AI, and Vanguard among the customers and partners using new Amazon SageMaker capabilities

At AWS re:Invent, Amazon Web Services, Inc. (AWS), an

Amazon.com, Inc. company (NASDAQ: AMZN), today announced five new

capabilities within Amazon SageMaker to help accelerate building,

training, and deployment of large language models and other

foundation models. As models continue to transform customer

experiences across industries, SageMaker is making it easier and

faster for organizations to build, train, and deploy machine

learning (ML) models that power a variety of generative AI use

cases. However, to use models successfully, customers need advanced

capabilities that efficiently manage model development, usage, and

performance. That’s why industry-leading models such as Falcon

40B and 180B, IDEFICS, Jurassic-2, Stable Diffusion, and StarCoder

are trained on SageMaker. Today’s announcements include a new

capability that helps SageMaker scale to larger models

by accelerating model training. Another new SageMaker

capability optimizes managed ML infrastructure operations by

reducing deployment costs and latency of models. AWS is also

introducing a new SageMaker Clarify capability that makes it easier

to select the right model based on quality parameters that support

responsible use of AI. To help customers apply these models across

organizations, AWS is also introducing a new no-code capability in

SageMaker Canvas that makes it faster and easier for customers to

prepare data using natural-language instructions. Additionally,

SageMaker Canvas continues to democratize model building and

customization by making it easier for customers to use models to

extract insights, make predictions, and generate content using an

organization’s proprietary data. These advancements build on

SageMaker's extensive capabilities to help customers innovate with

ML at scale. To get started with Amazon SageMaker, visit

aws.amazon.com/sagemaker.

Recent advancements in ML, along with ready availability of

scalable compute capacity and the massive proliferation of data,

have led to the rise of models that contain billions of parameters,

making them capable of performing a wide range of tasks like

writing blog posts, generating images, solving math problems,

engaging in dialog, and answering questions based on a document.

Today, tens of thousands of customers like 3M, AstraZeneca,

Ferrari, LG AI Research, Ryanair, Thomson Reuters, and Vanguard are

using SageMaker to make more than 1.5 trillion inference requests

every month. In addition, customers like AI21 Labs, Stability AI,

and Technology Innovation Institute are using SageMaker to train

models with billions of parameters. As customers move from

building mostly task-specific models to the large, general-purpose

models that power generative AI, they work with massive datasets

and more complex infrastructure setups—all while optimizing for

cost and performance. Customers also want to build and customize

their own models to create unique customer experiences, embodying

the company’s voice, style, and services. With more than 380

capabilities and features added since the service was launched in

2017, SageMaker offers customers everything they need to build,

train, and deploy production-ready models at scale.

“Machine learning is one of the most profound technological

developments in recent history, and interest in models has spread

to every organization,” said Bratin Saha, vice president of

Artificial Intelligence and Machine Learning at AWS. “This growth

in interest is presenting new scaling challenges for customers who

want to build, train, and deploy models faster. From accelerating

training, optimizing hosting costs, reducing latency, and

simplifying the evaluation of foundation models, to expanding our

no-code model-building capabilities, we are on a mission to

democratize access to high-quality, cost-efficient machine learning

models for organizations of all sizes. With today’s announcements,

we are enhancing Amazon SageMaker with fully managed, purpose-built

capabilities that help customers make the most of their machine

learning investments.”

New capabilities make it easier and faster for customers to train and operate models to power their generative AI applications

As generative AI continues to gain momentum, many emerging

applications will rely on models. But most organizations struggle

to adapt their infrastructure to meet the demands of these new

models, which can be difficult to train and operate efficiently at

scale. Today, SageMaker is adding two new capabilities that help

ease the burdens of training and deploying models at scale.

- SageMaker HyperPod accelerates foundation model (FM) training at

scale: Many organizations want to train their own models using

graphics processing unit (GPU)-based and Trainium-based compute

instances at low cost. However, the volume of data, size of the

models, and time required for training models have exponentially

increased the complexity of training a model, requiring customers

to further adapt their processes to deal with these emerging

demands. Customers often need to split their model training across

potentially hundreds or thousands of accelerators. They then run

trillions of data computations in parallel for weeks or months at a

time, which is time consuming and requires specialized ML

expertise. The number of accelerators and training time increase

substantially compared to training task-specific models, so the

likelihood of rare, small errors, like a single accelerator

failure, compounds. These errors can disrupt the entire training

process and require manual intervention to identify, isolate,

debug, repair, and recover from the issue, further delaying

progress. During the FM training process, customers are frequently

required to pause, evaluate ongoing performance, and make

optimizations to the training code. For uninterrupted model

training, developers have to continuously save training progress

(commonly known as checkpointing), so they don’t lose progress and

can resume training from where they last left off. These challenges

increase the time it takes to train a model, driving up costs and

delaying the deployment of new generative AI innovations. SageMaker

HyperPod removes the undifferentiated heavy lifting involved in

building and optimizing ML infrastructure for training models,

reducing training time by up to 40%. SageMaker HyperPod is

pre-configured with SageMaker’s distributed training libraries that

enable customers to automatically split training workloads across

thousands of accelerators, so workloads can be processed in

parallel for improved model performance. SageMaker HyperPod also

ensures customers can continue model training uninterrupted by

periodically saving checkpoints. When a hardware failure occurs

during training, SageMaker HyperPod automatically detects the

failure, repairs or replaces the faulty instance, and resumes the

training from the last saved checkpoint, removing the need for

customers to manually manage this process and helping them train

for weeks or months in a distributed setting without

disruption.

- SageMaker Inference reduces model deployment costs and

latency: As organizations deploy models, they are constantly

looking for ways to optimize their performance. To reduce

deployment costs and decrease response latency, customers use

SageMaker to deploy models on the latest ML infrastructure

accelerators, including AWS Inferentia and GPUs. However, some

models do not fully utilize the accelerators available with those

instances, leading to an inefficient use of hardware resources.

Some organizations also deploy multiple models to the same instance

to better utilize all of the available accelerators, but this

requires complex infrastructure orchestration that is time

consuming and difficult to manage. When multiple models share the

same instance, each model has its own scaling needs and usage

patterns, making it challenging to predict when customers need to

add or remove instances. For example, one model may be used to

power an application where usage can spike during certain hours,

while another model may have a more consistent usage pattern. In

addition to optimizing costs, customers want to provide the best

end-user experience by reducing latency. Because model outputs

could range from a single sentence to an entire blog post, the time

it takes to complete the inference request varies significantly,

leading to unpredictable spikes in latency if the requests are

routed randomly between instances. SageMaker now supports new

inference capabilities that help customers reduce deployment costs

and latency. With these new capabilities, customers can deploy

multiple models to the same instance to better utilize the

underlying accelerators, reducing deployment costs by 50% on

average. Customers can also control scaling policies for each model

separately, making it easier to adapt to model usage patterns while

optimizing infrastructure costs. SageMaker actively monitors

instances that are processing inference requests and intelligently

routes requests based on which instances are available, achieving

20% lower inference latency on average.
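
The announcement does not describe how requests are routed between instances. As a minimal sketch of the general idea only, the following assumes a "least in-flight requests" policy; the `Instance` and `LeastBusyRouter` names are hypothetical and are not part of any SageMaker API.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Instance:
    """One hosting instance; in_flight counts requests still being processed."""
    name: str
    in_flight: int = 0


@dataclass
class LeastBusyRouter:
    """Hypothetical router: send each request to the least-loaded instance."""
    instances: List[Instance] = field(default_factory=list)

    def route(self) -> Instance:
        # Pick the instance with the fewest in-flight requests;
        # ties go to the first instance in the list.
        target = min(self.instances, key=lambda inst: inst.in_flight)
        target.in_flight += 1
        return target

    def complete(self, instance: Instance) -> None:
        # Called once a response has been returned to the caller.
        instance.in_flight -= 1


router = LeastBusyRouter([Instance("a"), Instance("b")])
first = router.route()    # both idle, so "a" is chosen
second = router.route()   # "a" is busy, so "b" is chosen
router.complete(second)   # "b" finishes a short response
third = router.route()    # "a" is still generating, so "b" is chosen again
```

Compared with random routing, a policy like this keeps a long-running generation on one instance from delaying new requests that another instance could serve right away.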

New capability helps customers evaluate any model and select the best one for their use case

Today, customers have a wide range of options when choosing a

model to power their generative AI applications, and they want to

compare these models quickly to find the best option based on

relevant quality and responsible AI parameters (e.g., accuracy,

fairness, and robustness). However, when comparing models that

perform the same function (e.g., text generation or summarization)

or that are within the same family (e.g., Falcon 40B versus Falcon

180B), each model will perform differently across various

responsible AI parameters. Even the same model fine-tuned on two

different datasets could perform differently, making it challenging

to know which version works best. To start comparing models,

organizations must first spend days identifying relevant

benchmarks, setting up evaluation tools, and running assessments on

each model. While customers have access to publicly available model

benchmarks, they are often unable to evaluate the performance of

models on prompts that are representative of their specific use

cases. In addition, these benchmarks are often hard to decipher and

are not useful for evaluating criteria like brand voice, relevance,

and style. Then an organization has to go through the

time-consuming process of manually analyzing results and repeating

this process for every new use case or fine-tuned model.

SageMaker Clarify now helps customers evaluate, compare, and

select the best models for their specific use case based on their

chosen parameters to support an organization’s responsible use of

AI. With the new capability in SageMaker Clarify, customers can

easily submit their own model for evaluation or select a model via

SageMaker JumpStart. In SageMaker Studio, customers choose the

models that they want to compare for a given task, such as question

answering or content summarization. Customers then select

evaluation parameters and upload their own prompt dataset or select

from built-in, publicly available datasets. For sensitive criteria

or nuanced content that requires sophisticated human judgement,

customers can choose to use their own workforce, or a managed

workforce provided by SageMaker Ground Truth, to review the

responses within minutes using feedback mechanisms. Once customers

finish the setup process, SageMaker Clarify runs its evaluations

and generates a report, so customers can quickly evaluate, compare,

and select the best model based on performance criteria.
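
To illustrate the kind of comparison described above, here is a minimal sketch that scores two stand-in models against a small prompt dataset and picks the higher-scoring one. It does not use the SageMaker Clarify API. The prompts, the `model_a` and `model_b` functions, and the case-insensitive exact-match metric are all assumptions made for the example.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical prompt dataset: (prompt, expected answer) pairs.
PROMPTS: List[Tuple[str, str]] = [
    ("Capital of France?", "Paris"),
    ("2 + 2 = ?", "4"),
    ("Opposite of hot?", "cold"),
]


def model_a(prompt: str) -> str:
    # Stand-in for a real model endpoint; it knows two of the three answers.
    known = {"Capital of France?": "Paris", "2 + 2 = ?": "4"}
    return known.get(prompt, "unknown")


def model_b(prompt: str) -> str:
    # Stand-in that knows all three answers, one with different casing.
    known = {
        "Capital of France?": "paris",
        "2 + 2 = ?": "4",
        "Opposite of hot?": "cold",
    }
    return known.get(prompt, "unknown")


def accuracy(model: Callable[[str], str], dataset: List[Tuple[str, str]]) -> float:
    # Case-insensitive exact match between the model output and the reference.
    hits = sum(
        model(prompt).strip().lower() == expected.strip().lower()
        for prompt, expected in dataset
    )
    return hits / len(dataset)


def compare(
    models: Dict[str, Callable[[str], str]], dataset: List[Tuple[str, str]]
) -> Dict[str, float]:
    # Build a simple report that maps each model name to its score.
    return {name: accuracy(fn, dataset) for name, fn in models.items()}


report = compare({"model_a": model_a, "model_b": model_b}, PROMPTS)
best = max(report, key=report.get)
```

Exact match is only one possible metric. Criteria such as brand voice or style, which the text notes are hard to benchmark, would need human review or a different scoring function.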

New Amazon SageMaker Canvas enhancements make it easier and faster for customers to integrate generative AI into their workflows

Amazon SageMaker Canvas helps customers build ML models and

generate predictions without writing a single line of code. Today's

announcement expands on SageMaker Canvas’ existing, ready-to-use

capabilities that help customers use models to power a range of use

cases, in a no-code environment.

- Prepare data using natural-language instructions: Today,

the visual interface in SageMaker Canvas makes it easy for those

without ML expertise to do their own data preparation, but some

customers want a faster, more intuitive way to navigate their

datasets. Customers can now get started quickly with sample queries

and ask ad-hoc questions throughout the process to streamline data

preparation. Customers can also do complex transformations, using

natural-language instructions to fix common data problems like

filling in missing values in a column. With this new no-code

interface, customers can dramatically simplify how they work with

data on SageMaker Canvas, reducing time spent preparing data from

hours to minutes.

- Leverage models for business analysis at scale:

Customers use SageMaker Canvas to build ML models and generate

predictions for a variety of tasks, including demand forecasting,

customer churn prediction, and financial portfolio analysis.

Earlier this year, SageMaker Canvas made it possible for customers

to access multiple models on Amazon Bedrock, including models from

AI21 Labs, Anthropic, and Amazon, along with models from MosaicML

and TII through SageMaker JumpStart. With the same no-code

interface they use today, customers can upload a dataset and select

a model, and SageMaker Canvas automatically helps customers build

custom models to generate predictions immediately. SageMaker Canvas

also displays performance metrics, so customers can collaborate

easily to generate predictions using models and understand how well

the FM is performing on a given task.
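
For readers curious what a data fix such as "filling in missing values in a column" amounts to in code, the sketch below shows one common approach, mean imputation. SageMaker Canvas itself is no-code, and the announcement does not say which fill strategy it applies; the `fill_missing_with_mean` function and the sample values are hypothetical.

```python
from statistics import mean
from typing import List, Optional


def fill_missing_with_mean(column: List[Optional[float]]) -> List[float]:
    """Replace None entries with the mean of the values that are present."""
    present = [value for value in column if value is not None]
    if not present:
        raise ValueError("column has no values to average")
    fill = mean(present)
    return [fill if value is None else value for value in column]


# Hypothetical column of wait times with two missing entries.
wait_times = [4.0, None, 6.0, None, 8.0]
cleaned = fill_missing_with_mean(wait_times)
```

Mean imputation is a simple default. A median or a forward fill may suit skewed or time-ordered data better.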

Hugging Face is a leading machine learning company and open

platform for AI builders, offering open foundation models, and the

tools to create them. “Hugging Face has been using SageMaker

HyperPod to create important new open foundation models like

StarCoder, IDEFICS, and Zephyr, which have been downloaded millions

of times,” said Jeff Boudier, head of Product at Hugging Face.

“SageMaker HyperPod’s purpose-built resiliency and performance

capabilities have enabled our open science team to focus on

innovating and publishing important improvements to the ways

foundation models are built, rather than managing infrastructure.

We especially liked how SageMaker HyperPod is able to detect ML

hardware failure and quickly replace the faulty hardware without

disrupting ongoing model training. Because our teams need to

innovate quickly, this automated job recovery feature helped us

minimize disruption during the foundation model training process,

helping us save hundreds of hours of training time in just a

year.”

Salesforce is a leading AI customer relationship management

(CRM) platform, driving productivity and trusted customer

experiences powered by data, AI, and CRM. “At Salesforce, we have

an open ecosystem approach to foundation models, and Amazon

SageMaker is a vital component, helping us scale our architecture

and accelerate our go-to-market,” said Bhavesh Doshi, vice

president of Engineering at Salesforce. “Using the new SageMaker

Inference capability, we were able to put all our models onto a

single SageMaker endpoint that automatically handled all the

resource allocation and sharing of the compute resources,

accelerating performance and reducing deployment cost of foundation

models.”

Thomson Reuters is a leading source of information, including

one of the world’s most trusted news organizations. “One of the

challenges that our engineers face is managing customer call

resources during peak seasons to ensure the optimal number of

customer service personnel are hired to handle the influx of

inquiries,” said Maria Apazoglou, vice president of Artificial

Intelligence, Business Intelligence and Data Platforms at Thomson

Reuters. “Historical analysis of call center data containing call

volume, wait time, date, and other relevant metrics is time

consuming. Our teams are leveraging the new data preparation and

customization capabilities in SageMaker Canvas to train models on

company data to identify patterns and trends that impact call

volume during peak hours. It was extremely easy for us to build ML

models using our own data, and we look forward to increasing the

use of foundational models—without writing any code—through

Canvas.”

Workday, Inc. is a cloud-based software vendor specializing in

human capital management (HCM) and financial management

applications. “More than 10,000 organizations around the world rely

on Workday to manage their most valuable assets—their people and

their money,” said Shane Luke, vice president of AI and Machine

Learning at Workday. “We provide responsible and transparent

solutions to customers by selecting the best foundation model that

reflects our company’s policies around the responsible use of AI.

For tasks such as creating job descriptions, which must be high

quality and promote equal opportunity, we tested the new model

evaluation capability in Amazon SageMaker and are excited about the

ability to measure foundation models across metrics such as bias,

quality, and performance. We look forward to using this service in

the future to compare and select models that align with our

stringent responsible AI criteria.”

About Amazon Web Services

Since 2006, Amazon Web Services has been the world’s most

comprehensive and broadly adopted cloud. AWS has been continually

expanding its services to support virtually any workload, and it

now has more than 240 fully featured services for compute, storage,

databases, networking, analytics, machine learning and artificial

intelligence (AI), Internet of Things (IoT), mobile, security,

hybrid, virtual and augmented reality (VR and AR), media, and

application development, deployment, and management from 102

Availability Zones within 32 geographic regions, with announced

plans for 15 more Availability Zones and five more AWS Regions in

Canada, Germany, Malaysia, New Zealand, and Thailand. Millions of

customers—including the fastest-growing startups, largest

enterprises, and leading government agencies—trust AWS to power

their infrastructure, become more agile, and lower costs. To learn

more about AWS, visit aws.amazon.com.

About Amazon

Amazon is guided by four principles: customer obsession rather

than competitor focus, passion for invention, commitment to

operational excellence, and long-term thinking. Amazon strives to

be Earth’s Most Customer-Centric Company, Earth’s Best Employer,

and Earth’s Safest Place to Work. Customer reviews, 1-Click

shopping, personalized recommendations, Prime, Fulfillment by

Amazon, AWS, Kindle Direct Publishing, Kindle, Career Choice, Fire

tablets, Fire TV, Amazon Echo, Alexa, Just Walk Out technology,

Amazon Studios, and The Climate Pledge are some of the things

pioneered by Amazon. For more information, visit amazon.com/about

and follow @AmazonNews.

View source version on businesswire.com: https://www.businesswire.com/news/home/20231129229217/en/

Amazon.com, Inc. Media Hotline Amazon-pr@amazon.com

www.amazon.com/pr

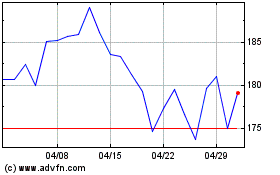

Amazon.com (NASDAQ:AMZN)

過去 株価チャート

から 6 2024 まで 7 2024

Amazon.com (NASDAQ:AMZN)

過去 株価チャート

から 7 2023 まで 7 2024